We offer some tips and articles that are a great help when technical questions arise during a production.

This information is especially useful when you bring a job to our studio: it avoids surprises and minimizes the problems caused by a lack of control over certain technical parameters.

How to prepare your project to bring it to our studio.

We offer practical guides to prepare your project from any of the three most common editing systems when you bring an edit to post-production.

FROM AVID PROJECT FROM ADOBE PREMIERE PROJECT FROM FINAL CUT PRO PROJECT

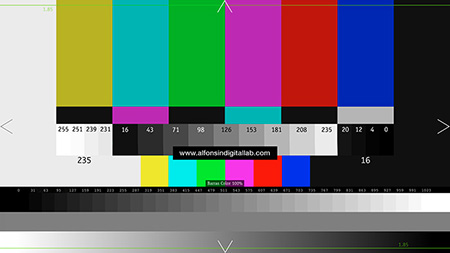

This test chart is very useful for adjusting your monitors correctly, so that there are no surprises caused by incorrect viewing.

We can also run it through a variety of compression processes or post-production pipelines with different software to see how the chart is modified, and so keep control over what we are working with.

Below are the instructions for correctly calibrating a broadcast monitor (CCIR 601/709). Import the file as "Full Range"; if you import it with video levels, the measurements will be incorrect.

Click here to download.

Bear in mind that video monitors and computer monitors differ in several ways:

The frame may be cut off on video monitors due to overscan.

The image may appear interlaced (or segmented-frame) on video monitors.

Black level (setup), gain, and gamma may differ.

Edge crop

Triangles at the edges mark the full frame boundaries, so that cropping can be detected on the monitor, in a downconversion, or in a file conversion. The horizontal lines show the crop for 1.85 film.

Color bars

Full-size color bars are 75%: white is 235, black is 16, and each of the colors is 180. These bars include a band at the bottom for saturation adjustment in the monitor's "blue only" mode. 100% color bars are provided at the bottom center: white is 235, black is 16, and each of the colors is 235. In PAL, the 100% color bars would exceed the saturation limits.
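The 180 and 235 values quoted above follow from scaling a percentage across the 16-235 video range. A minimal sketch of that arithmetic, assuming 8-bit code values; the function name `bar_level` is only illustrative:

```python
# 8-bit video-range anchors quoted in the text.
BLACK = 16
WHITE = 235


def bar_level(percent: float) -> int:
    """Return the 8-bit code value at a given percentage of the video range."""
    return round(BLACK + (WHITE - BLACK) * percent / 100)


# 75% bars land on 180, 100% bars on 235, and 0% on black (16).
for pct in (0, 75, 100):
    print(f"{pct:>3}% -> {bar_level(pct)}")
```
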

Super-white (8-bit units)

Super-white regions show a super-white level (255). File conversions should preserve the super-white area, but some algorithms remap 16-235 to 0-255.

A difference between 251 and 255 should be visible on the waveform monitor. On a calibrated video monitor, you should see a difference between 231 and 235, but regions above 235 may look the same if clipping occurs.

Super-black (8-bit units)

Super-black regions show a super-black level (0). Black below 16 is not a usable area. Some conversions clip below 16; others compress the signal, preserving some information.

On a calibrated video monitor you should see a difference between 16 and 20, but 16 should look the same as the values below it (4, 8, 12).
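To see why a 16-235 to 0-255 remap destroys the super-white and super-black patches, the conversion can be sketched as follows. This is an assumed, simplified remap with hard clipping, not the algorithm of any particular tool; the patch values are the ones named in the text.

```python
def video_to_full_clipped(code: int) -> int:
    """Remap 16-235 onto 0-255 and clip whatever falls outside 0-255."""
    scaled = round((code - 16) * 255 / 219)
    return max(0, min(255, scaled))


# Patch values mentioned on the chart.
patches = [4, 8, 12, 16, 20, 231, 235, 251, 255]
remapped = {code: video_to_full_clipped(code) for code in patches}

for code, out in remapped.items():
    print(f"{code:>3} -> {out}")
```

After this remap, 235, 251 and 255 all become 255, and 4, 8, 12 and 16 all become 0, so the differences the chart is designed to show can no longer be measured.
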

Grayscale

In the middle, there is an 8-step gray scale, from 0% to 100%. Numeric values indicate RGB levels on an 8-bit scale. At the bottom of the chart there is a 32-step gray scale, from 0% to 100%. Numeric values indicate RGB levels on a 16-bit scale.

The grayscale will show you gamma corrections applied to the image.

B/W ramps

The black-and-white ramp at the bottom of the image can reveal histogram defects (posterization) caused by color correction. This ramp only has 8-bit resolution.
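Posterization shows up as code values that disappear from the ramp's histogram. The sketch below illustrates the idea on a synthetic 8-bit ramp; the gamma value of 0.8 is an arbitrary stand-in for a color correction, and both function names are hypothetical.

```python
def apply_gamma(levels, gamma):
    """Apply a gamma curve to 8-bit levels and round back to 8 bits."""
    return [round(255 * (v / 255) ** gamma) for v in levels]


def missing_codes(levels):
    """List the code values between min and max that no longer occur."""
    present = set(levels)
    return [c for c in range(min(present), max(present) + 1) if c not in present]


ramp = list(range(256))            # ideal 8-bit ramp: every code is present
graded = apply_gamma(ramp, 0.8)    # stand-in for a color correction

print("gaps before:", len(missing_codes(ramp)))
print("gaps after: ", len(missing_codes(graded)))
```

Any gap reported after the correction corresponds to a step that will be visible as banding on the ramp.
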

Considerations with monitors

Some aspects of the test chart can be checked by eye on both video monitors and calibrated computer monitors. However, you should be aware that the specifications of the two monitor types differ in several ways, including overscan, interlacing, and black level (setup), gain, and gamma.

The ACES system was developed by the Academy of Motion Picture Arts and Sciences and is the best way to manage color in digital cinematography. It lets you bring the image from any digital camera or film scanner into a common space and process it for output to any of the existing standards in a simple and direct way.

The Academy has published some quick start guides, which you can download here.

ACES OVERVIEW SUMMARY DP Quick Start Guide DIT Quick Start Guide VFX Quick Start Guide ACES WORKFLOW SAMPLE

A filmmaking classic published by KODAK, this book offers technical information about light meters, cameras, lighting, film selection, post-production, and workflows in an easy-to-read and easy-to-use format.

The Essential Reference Guide for Filmmakers

DSLR cameras like the Canon 5D or Nikon D800 and the new AVCHD video cameras are commonly used in low-cost productions because their cost/quality ratio is quite good. Their workflow needs some special care if the footage has to pass through several tools.

The first thing to consider is the file format and codec. These are usually H.264 files with interframe compression. Such files cause many problems in post-production, so the first step is to convert them to a codec with intraframe compression, which is much more robust and withstands more processing without degradation. The ideal codecs for this are Apple ProRes and Avid DNxHD.

Another problem with these files is the lack of a timecode track and of metadata that assigns a "reel" value. When conforming EDLs between different post-production tools, the reel information and the timecodes are crucial for identifying the footage without error and for keeping the cuts frame-accurate.

If you are going to work with these cameras and plan to finish your project in our studio, we recommend software such as Shutter Encoder. This tool is free (you can make a donation) and quickly converts the original camera files into files with a post-production codec, adding a TC track and additional metadata and batch-renaming the files.
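For readers who prefer a scripted route, the same kind of conversion can be sketched with the FFmpeg command line instead of Shutter Encoder. This is only an illustration under assumptions: the file names, reel label, and start timecode are hypothetical, the reel is carried in the new file name only, and the function builds the command without running it.

```python
from pathlib import Path


def prores_command(source: Path, out_dir: Path, reel: str, start_tc: str) -> list[str]:
    """Build an ffmpeg command that transcodes one camera file to ProRes HQ.

    The output name is prefixed with the reel label (a simple batch rename),
    and a start timecode is written with ffmpeg's -timecode option.
    """
    target = out_dir / f"{reel}_{source.stem}.mov"
    return [
        "ffmpeg", "-i", str(source),
        "-c:v", "prores_ks", "-profile:v", "3",   # intraframe ProRes, HQ profile
        "-c:a", "pcm_s16le",                       # uncompressed audio
        "-timecode", start_tc,                     # adds a timecode track
        str(target),
    ]


# Hypothetical clip, reel name and timecode, for illustration only.
cmd = prores_command(Path("MVI_0001.MOV"), Path("transcoded"), "A001", "01:00:00:00")
print(" ".join(cmd))
```

The resulting list can be passed to `subprocess.run` once the paths have been checked; looping over a card's clips with an incrementing reel or timecode gives the batch behaviour described above.
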

There are a lot of formats, and we always have plenty of questions about them.

Here is a Creative Commons table in PDF that covers practically all of them. It is somewhat dated: some codecs have been updated and new ones have appeared, but it is still fine as a reference.

FORMATS AND CODECS CHART

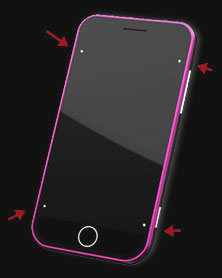

When we build composites and the camera, or the object where new elements are embedded, is moving, it is important to have good tracking points so that the final blend of all the elements is optimal.

Here is a list of tips so that those points are recorded in the best possible way and do not become a problem while still doing their job.

Main characteristics of a tracking point.

A good tracking point has well-defined detail at the pixel level, such as a corner rather than the curve of a circle.

Good tracking points.

Bad tracking points.

Tracking on screens.

Placing tracking points on screens in order to embed images in them is very common in many productions, and we must do it well to make the integration as realistic as possible. It is important to try to preserve the screen's reflections (if any) so that in post-production we can apply them to the final composite. For this, it is best to place a few points that are as small as possible, so they are easier to remove later. If the tracking marks are large, we lose a large area of the screen and cannot use it for the highlights layer.

If we use a chroma background and pass a hand in front of it, for example, we have to be careful that the hand does not cover the points, because if we lose them we will have trouble calculating the perspective accurately. Since there is a good contrast ratio between the corners and the black frame of the screen, in some cases we may be able to do a good tracking analysis without placing dots on the chroma.

The ideal way to composite with tracking points is to use a 3D tracking tool such as Mocha, which analyzes the points in the image very well on all axes and respects all the perspective, scale, and position information.
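To make the screen-insert case concrete: once four corners of the screen have been tracked in a frame, the perspective mapping for the inserted image can be computed as a planar homography. The sketch below is a generic textbook construction in plain Python, not the method used by Mocha or any other tool; the corner coordinates are invented for illustration.

```python
def solve(a, b):
    """Solve the linear system a*x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def homography(src, dst):
    """Return the 9 homography coefficients mapping 4 src points onto 4 dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    return solve(a, b) + [1.0]


def warp(h, x, y):
    """Map a point of the insert image into the frame."""
    w = h[6] * x + h[7] * y + h[8]
    return ((h[0] * x + h[1] * y + h[2]) / w,
            (h[3] * x + h[4] * y + h[5]) / w)


# Insert image in normalized coordinates; tracked screen corners in pixels.
insert_corners = [(0, 0), (1, 0), (1, 1), (0, 1)]
tracked_corners = [(100, 80), (900, 120), (880, 600), (120, 560)]
h = homography(insert_corners, tracked_corners)
print(warp(h, 0.5, 0.5))  # where the center of the insert lands in the frame
```

Four points are the minimum for this calculation, which is why losing a single marker behind a hand breaks the perspective solve.
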

Tracking with perspectives.

When we composite with chroma keys and need depth information to maintain perspective and scale, use a minimum of 4 points and place them so that they define the three-dimensional space. Here, points made with a cross are the best option. For handheld or Steadicam camera moves, microphone stands with labels are very useful as tracking points. This way we have points that define the three-dimensional space very well, and with the help of a 3D tracking tool we can extract the information needed to composite the image effectively.

MXF (Material Exchange Format) is a file format aimed at the exchange of audiovisual material with associated metadata between different applications. Its technical characteristics are defined in the SMPTE 377M standard, and it was developed by the Pro-MPEG Forum, the EBU, and the AAF Association, together with the main companies and manufacturers in the broadcast industry. The ultimate goal is an open file format that facilitates sharing video, audio, data, and associated metadata within a file-based workflow.

An MXF file works as a container that can carry video, audio, graphics, etc. and their associated metadata, along with the information that describes the structure of the file. An important factor is that MXF is independent of the compression format used: it can carry different formats such as MPEG, DV, or a sequence of TIFFs. The great advantage of MXF is that it lets you save and exchange the associated metadata, which describes the content and the way the file should be read.

Metadata can contain information about:

The file structure

The content itself (MPEG, DV, ProRes, DnxHD, JPG, PCM, etc.)

Time code

Keywords or titles

Subtitles

Editing notes

Date and version number of a clip

Etc.

MXF is based on the AAF (Advanced Authoring Format) data model, and the two formats are complementary. The difference is that AAF is optimized for post-production processes, because it can store richer metadata and can reference external material. MXF files can be embedded within AAF files: an AAF project can include audiovisual content and its associated metadata, but it can also reference other MXF content stored externally.

Achieving interoperability is the primary goal of MXF, and it is established in three areas:

-Multi-platform. It works across different network protocols and operating systems, including Windows, Mac OS, Unix, and Linux.

-Compression-independent. It does not convert between compression formats, which makes it easier to handle more than one native format. It can also handle uncompressed video.

-Streaming transfer. MXF interacts seamlessly with bidirectional streaming media. SDTI is an example of streaming that works seamlessly with this format. Transmission over IP networks is also optimal.

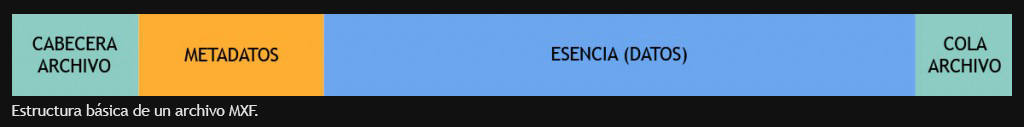

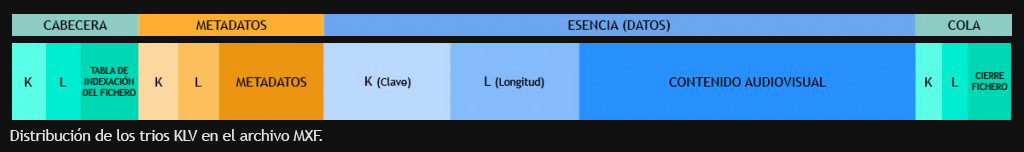

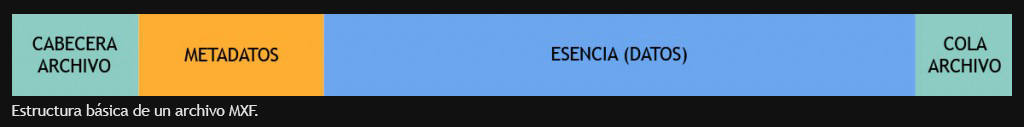

An MXF file is structured as a file header, which details the content of the file and its synchronization together with the metadata associated with the media; the body, which contains the essence, that is, the original media data; and the footer, which closes the file.
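The header, body, and footer are each introduced by a partition pack whose key identifies the kind of partition. A minimal sketch of that check follows; the byte values are quoted from memory of SMPTE 377M and common open-source demuxers, so treat them as an assumption to verify against the standard. The function name is illustrative.

```python
# First 13 bytes shared by MXF partition pack keys (assumed from SMPTE 377M).
PARTITION_PREFIX = bytes.fromhex("060e2b34020501010d01020101")
# Byte 13 of the key then selects the kind of partition.
KINDS = {0x02: "header", 0x03: "body", 0x04: "footer"}


def partition_kind(key: bytes):
    """Return 'header', 'body' or 'footer' for a partition pack key, else None."""
    if len(key) != 16 or not key.startswith(PARTITION_PREFIX):
        return None
    return KINDS.get(key[13])


# Example keys built by hand; byte 14 is a status byte and byte 15 is zero.
header_key = PARTITION_PREFIX + bytes([0x02, 0x04, 0x00])
footer_key = PARTITION_PREFIX + bytes([0x04, 0x04, 0x00])
print(partition_kind(header_key), partition_kind(footer_key))
```
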

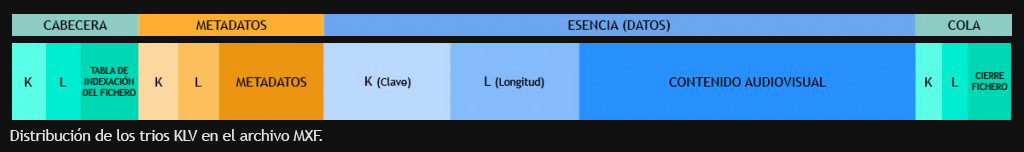

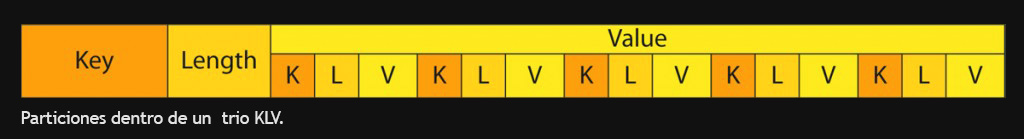

The data in MXF files is stored as KLV (Key-Length-Value) triplets. Each triplet consists of a unique 16-byte identification key (key), the length of the data stored in that triplet (length), and the data itself (value). Organizing the data this way makes it possible to locate any specific element within the MXF file just by reading the keys. This structure also allows the format to grow, adding new features as new compression techniques and metadata schemes are defined.
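Reading KLV triplets can be sketched in a few lines. The 16-byte key comes from the text; the length encoding used here (BER: one byte below 0x80, or a byte 0x80+n followed by n length bytes) is how KLV is defined in SMPTE 336M as far as I know, and is stated as an assumption. The sample data is synthetic, not a real MXF file.

```python
def read_klv(data: bytes, offset: int = 0):
    """Return (key, value, next_offset) for the triplet starting at offset."""
    if offset + 17 > len(data):
        raise ValueError("truncated key or length")
    key = data[offset:offset + 16]
    first = data[offset + 16]
    pos = offset + 17
    if first < 0x80:                       # short form: the byte is the length
        length = first
    else:                                  # long form: next n bytes hold the length
        n = first & 0x7F
        if pos + n > len(data):
            raise ValueError("truncated length")
        length = int.from_bytes(data[pos:pos + n], "big")
        pos += n
    value = data[pos:pos + length]
    if len(value) < length:
        raise ValueError("truncated value")
    return key, value, pos + length


def iter_klv(data: bytes):
    """Yield (key, value) for every triplet in data."""
    offset = 0
    while offset < len(data):
        key, value, offset = read_klv(data, offset)
        yield key, value


# Two synthetic triplets: one short-form length, one long-form length.
key_a, key_b = bytes(range(16)), bytes([0xFF] * 16)
stream = key_a + b"\x03abc" + key_b + b"\x82\x01\x2c" + bytes(300)
for key, value in iter_klv(stream):
    print(key.hex(), len(value))
```

Because every triplet announces its own length, a reader can skip values it does not understand and look only at the keys, which is the property the paragraph above describes.
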

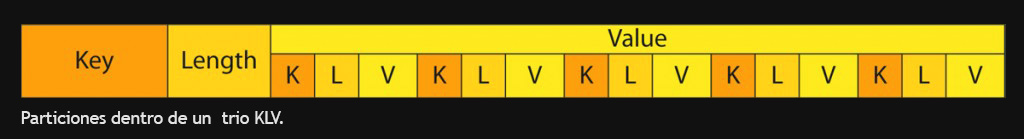

We can take this one step further: partitions are allowed within a KLV triplet. That is, the data of a triplet can be split into a succession of KLV triplets, which makes the file structure more robust. One advantage appears, for example, when transmitting MXF files over networks: if the connection is lost and the transfer is cut off, there is no need to send the entire file again once it is restored, because we can resume at the triplet where the transfer broke.
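The resume idea can be sketched as a scan over the bytes received so far, stopping at the first triplet that is not complete. This is an illustration of the principle only, assuming 16-byte keys and BER-encoded lengths; real transfer protocols and MXF tools may handle resumption differently.

```python
def resume_offset(partial: bytes) -> int:
    """Return the offset of the first KLV triplet that is incomplete in `partial`."""
    offset = 0
    while True:
        pos = offset + 16                  # skip the 16-byte key
        if pos >= len(partial):
            return offset                  # key or length byte is missing
        first = partial[pos]
        pos += 1
        if first < 0x80:
            length = first
        else:
            n = first & 0x7F
            if pos + n > len(partial):
                return offset              # length field was cut off
            length = int.from_bytes(partial[pos:pos + n], "big")
            pos += n
        if pos + length > len(partial):
            return offset                  # value was cut off
        offset = pos + length              # triplet is complete, move on


# Three synthetic 20-byte triplets; the transfer broke inside the third one.
triplet = bytes(16) + b"\x03abc"
received = (triplet * 3)[:47]
print(resume_offset(received))  # the sender can restart from this byte
```
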

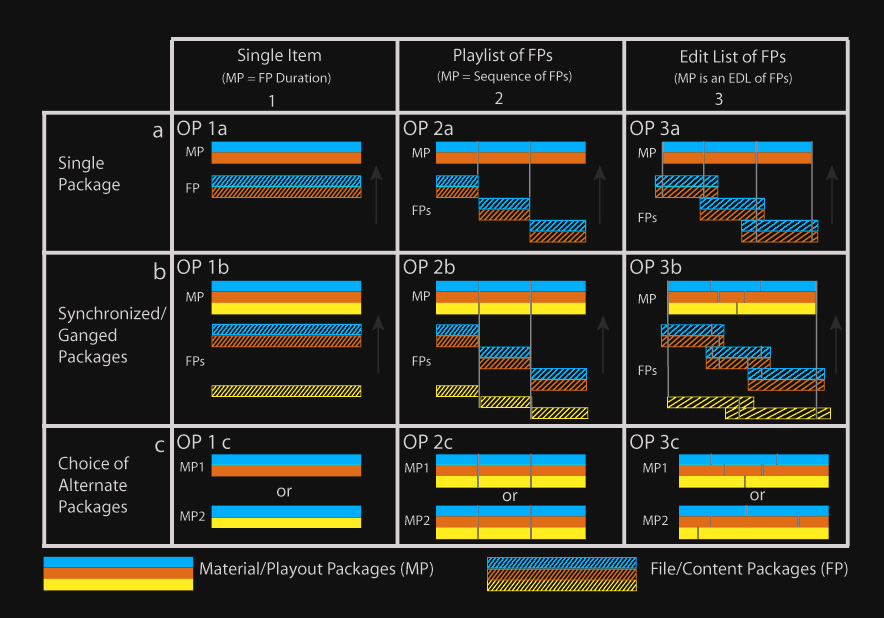

By now this should all be fairly clear, and you may be asking why an MXF generated by an XDCAM camera is not compatible with one generated by a P2 camera, if the MXF format was created to ensure compatibility. The great flexibility of MXF allows different manufacturers to interpret and apply the standard differently, and this is why the MXF files produced by each manufacturer's products are not compatible with each other. This has led to a number of constrained variants being defined to improve interoperability for specific applications. These are the so-called Operational Patterns; each has its own specification under its own standard, which defines the type of image and sound essence it contains and the structure of the metadata. Of these patterns, OP-1a and OP-Atom are the ones we encounter most often.

The generic operational pattern is OP-1a. It was created as a replacement for videotape: a single MXF file contains video, multiple audio channels, and timecode. It is very simple and flexible, but it has many limitations in what you can do with it. Examples are the XDCAM format and JVC cameras that record to cards, where each clip is a single file with video and audio.

OP-Atom is a very simple file format whose essence can contain only a single item, be it a video or an audio track. In general, the metadata linked to the media contained in an MXF OP-Atom file is stored in AAF or XML files. A typical example is the P2 format, where video and audio tracks are wrapped in separate MXF OP-Atom files and the metadata that associates them goes in a separate XML file.

The media files that AVID generates are also OP-Atom, and their associated metadata is in AAF. This is the pattern most used in editing environments, where individual access to the audiovisual components is needed. In AVID's case, these files incorporate non-standard MXF metadata that the manufacturer's applications use to index them, which can create incompatibility problems if they are exchanged with non-AVID systems.

In digital cinema, the MXF files that carry the image and audio are also OP-Atom. Here they are restricted to a specific compression and color space (JPEG 2000 and XYZ) for video and 16 PCM tracks for audio, so their compatibility outside this environment is very limited. Their timing and metadata are carried in XML files.

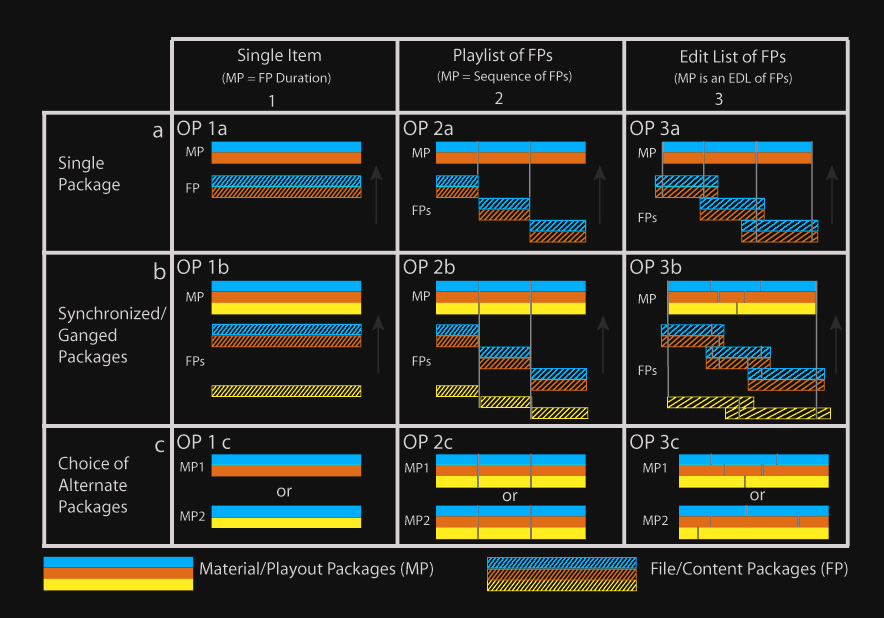

We can define a group of operational patterns (OP) with various levels of complexity, of which OP-1a is the simplest. A single continuous track with video, audio, and metadata packed into one file is what defines OP-1a. The most complex of these patterns is OP-3c, which combines multiple clips (packed files) with multiple playlists. Here is a table with them.
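The grid from OP-1a to OP-3c can be enumerated as two axes. The axis labels below (item complexity 1 to 3, package complexity a to c) are given as I recall them from SMPTE 377M and should be checked against the standard; OP-Atom sits outside this grid.

```python
# Axis labels are an assumption recalled from SMPTE 377M.
ITEM_COMPLEXITY = {1: "single item", 2: "playlist items", 3: "edit items"}
PACKAGE_COMPLEXITY = {"a": "single package", "b": "ganged packages", "c": "alternate packages"}


def operational_patterns():
    """Return the 3x3 grid of generalized operational patterns, simplest first."""
    return {
        f"OP-{item}{package}": (ITEM_COMPLEXITY[item], PACKAGE_COMPLEXITY[package])
        for item in ITEM_COMPLEXITY
        for package in PACKAGE_COMPLEXITY
    }


for name, (items, packages) in operational_patterns().items():
    print(f"{name}: {items}, {packages}")
```
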

The AMWA (Advanced Media Workflow Association) was created to lead the development and promotion of standards and technologies that enable more effective workflows for networked media. Its current projects advance the use of the AAF, BXF, MXF, and XML formats in file-based workflows. The association works in close collaboration with SMPTE and other standards bodies. One of its projects concerns MXF files and defines a set of rules that constrain the MXF specification in order to adapt the format to different applications and workflows.

The AMWA specifications can be summarized in the following list:

AS-02 - MXF Versioning. This was developed to support the storage and management of the components of a program in MXF, allowing versions, multiple languages, and deliveries to different media. It is a file package that contains all the video, audio, and metadata elements needed to generate multiple versions of a product. Who should use it? Post-production environments, broadcasters, and content distributors.

AS-03 - Program Delivery. This MXF specification is optimized for distributing a program and broadcasting it directly from a video server. It is a single file that incorporates audio, video, and metadata for a single program. Who should use it? Broadcast networks.

AS-10 - MXF for Production. The AS-10 application specification aims to establish a common MXF file format for the entire production workflow, including camera recording, server ingest, editing, playback, digital distribution, and archiving. An example is the use of MPEG Long-GOP. The project includes the development of an application to validate files as an aid for quality control processes. Who should use it? Production and post-production facilities, broadcasters, and content distributors.

AS-11 - MXF for Contribution. This is an MXF file format for delivering finished programs from program makers to broadcast stations. AS-11 includes the functionality of AS-03 and extends it to include AVC-Intra 100; it also supports standard-definition D-10 video with AES3 audio. AS-11 defines a minimal core metadata set and a metadata schema with program segmentation. Who should use it? Broadcasters and content distributors.

AS-12 - Commercial Delivery. AS-12 is a subset of the MXF file format for delivering finished advertisements to television stations or broadcast networks. The specification provides a slate and other metadata to associate with the sound and video essence. AS-12 establishes that the content is identified explicitly with computer assistance and that this identification controls the playlist. Who should use it? Production and post-production facilities, commercial distributors, broadcasters, and cable networks.

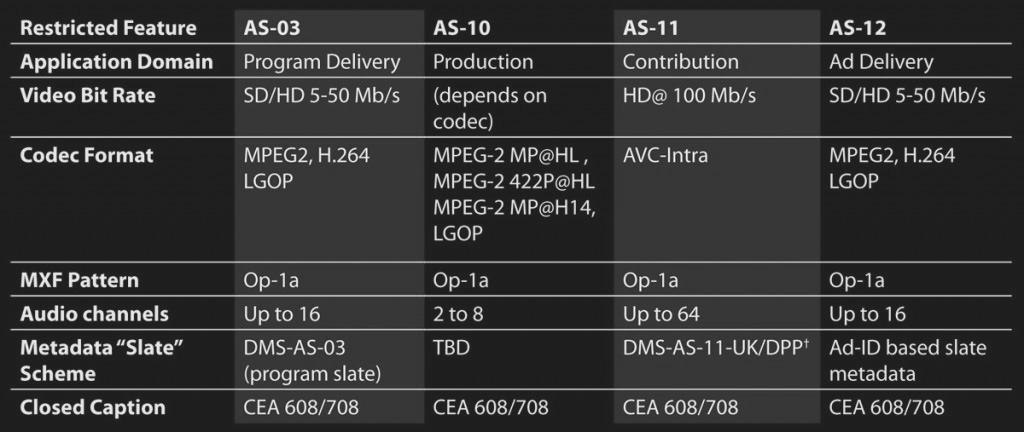

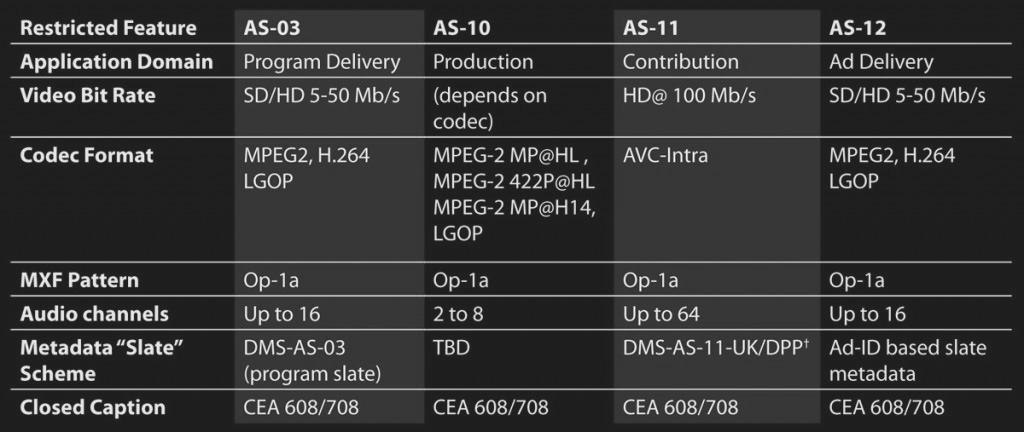

The main characteristics of these MXF specifications are in the following table. AS-02 does not appear because its development process has not yet been definitively closed.

Speaking of these formats, AVID has supported them since version 7 of Media Composer. A new AMA (Avid Media Access) component called Avid Media Authoring allows users to deliver and archive in multiple output formats, among them AS-02 and AS-11.

These formats are regularly the medium for delivering our work to broadcasters and content distribution centers. SMPTE has also approved as a standard IMF (Interoperable Master Format), whose structure is very similar to that of the DCP (Digital Cinema Package) but is designed for the broadcast world, and which NETFLIX uses for the deliveries it receives.
